Gesture-Controlled Robot Arm

Objective Summary

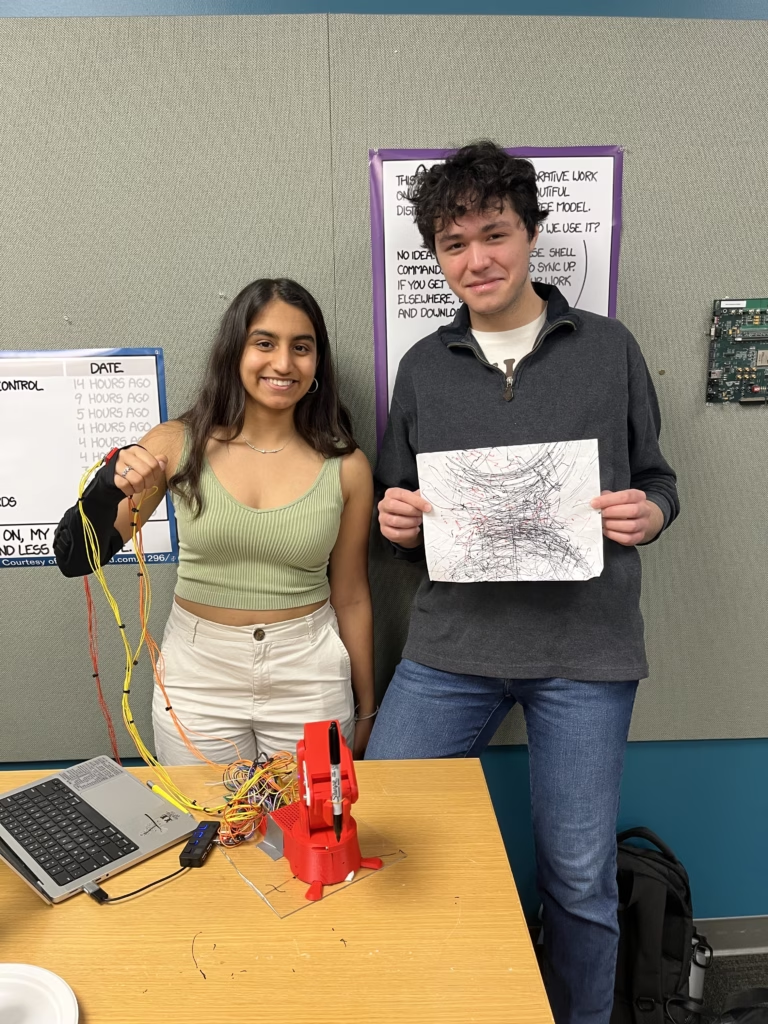

For the final project of an embedded systems class, a friend and I built a gesture-controlled robot arm.

Further Background

At Stanford University, CS 107e is an infamous course. It is a spinoff of CS 107, the intro systems course required for all CS majors. 107e differs from 107 in that it is more selective, has a more hands-on focus, and generally attracts a crazier breed of student.

Throughout 107e, each student programs an entire computer from scratch in C, taking a bare-metal Mango Pi RISC-V board and turning it into a fully functional computer with a custom graphics library, keyboard driver, shell, and more, even implementing functions such as printf() and malloc() from scratch with no libraries. This is all done in 8 weeks. For the final 2 weeks of the course, partners are matched to build a final project of their choosing that uses the skills learned in the course. My partner (Aditri Patil) and I decided to build a gesture-controlled robot arm. Two weeks is not enough time to build a fully fleshed-out, functional product, so we treated it more as a proof-of-concept challenge.

Process

The general idea is for the user to wear a sleeve of IMUs on their arm, with one IMU each on the back of the hand, the forearm, and the upper arm. The angle of each IMU relative to its neighbor corresponds to the bend angle of the matching servo on the robot arm. Thus, the robot arm matches the real-time movements of the user’s arm, almost like an imitating twin.

There are ways to fancify this, such as using a Kalman filter, but that was beyond the scope of this project.

Since this was a software-focused class, we focused on the software. The STL files for the 3D-printed arm were simply taken from the internet: https://www.printables.com/model/818975-compact-robot-arm-arduino-3d-printed

The software challenges for this project were considerable. Since this was a systems-based class with no libraries provided, we had to implement each library/module entirely from scratch.

We wrote a custom PWM (pulse width modulation) library to control servo movement, a custom IMU (inertial measurement unit) library to read accelerometer and gyroscope data from the IMUs, and a custom I2C (inter-integrated circuit) library for communication. Finally, we went through a bit of integration hell to combine all this together. We spent several all-nighters in the lab debugging all of our issues, but the following video shows our results:

Obviously, it is not the smoothest robot arm ever, but that was not the intention. At the systems level, we implemented everything well, and we are even able to draw abstract art with a Sharpie!
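For readers curious what the core of a servo PWM library like the one mentioned above looks like, here is a rough sketch, not our actual code. It assumes a typical hobby servo: a 20 ms (50 Hz) frame with a pulse between 1000 and 2000 microseconds spanning 0 to 180 degrees. Real servos vary, so these constants are assumptions to check against the datasheet. The function names and the idea of a counter with `period_ticks` ticks per frame are hypothetical and do not describe any particular hardware register.

```c
#include <stdint.h>

/* Assumed servo timing; typical for hobby servos, but check the datasheet. */
#define SERVO_PERIOD_US    20000u /* one 50 Hz frame */
#define SERVO_MIN_PULSE_US 1000u  /* pulse width at 0 degrees (assumption) */
#define SERVO_MAX_PULSE_US 2000u  /* pulse width at 180 degrees (assumption) */

/* Map an angle in degrees, clamped to [0, 180], to a pulse width in microseconds. */
static uint32_t servo_pulse_us(int angle_deg) {
    if (angle_deg < 0) angle_deg = 0;
    if (angle_deg > 180) angle_deg = 180;
    uint32_t span = SERVO_MAX_PULSE_US - SERVO_MIN_PULSE_US;
    return SERVO_MIN_PULSE_US + (span * (uint32_t)angle_deg) / 180u;
}

/* Convert a pulse width into a compare value for a hypothetical PWM counter
 * that counts period_ticks ticks per 20 ms frame. The 64-bit intermediate
 * avoids overflow for large tick counts. */
static uint32_t servo_compare_ticks(uint32_t pulse_us, uint32_t period_ticks) {
    return (uint32_t)(((uint64_t)pulse_us * period_ticks) / SERVO_PERIOD_US);
}
```

With these assumptions, a 90 degree command becomes a 1500 microsecond pulse, which on a counter running 40000 ticks per frame would be a compare value of 3000.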